TL;DR — In April 2026, Claude (Opus 4.7) wins on coding, long-context work, and natural writing, while ChatGPT (GPT-5.4) wins on ecosystem scale, multimodal tools, and raw market share. Claude occupies 3 of the top 4 spots on the LMSYS Arena leaderboard, leads SWE-bench Verified at 78.7–80.8%, and is preferred by roughly 70% of developers for coding. ChatGPT still commands 60%+ of global AI chatbot traffic with over 900 million weekly active users. If you write code, long documents, or nuanced prose, pick Claude. If you need multimodal flexibility, plugins, and the widest integration ecosystem, pick ChatGPT.

Why the ChatGPT vs Claude Debate Exploded in 2026

Search volume for “ChatGPT vs Claude” is up 11× year over year in early 2026, and it’s not a coincidence. The gap between these two models has never been tighter — or more consequential for professionals making daily workflow decisions.

Through 2023 and 2024, ChatGPT was the default answer for almost any AI use case. That changed through 2025 as Claude quietly picked up a reputation for better coding output and more natural writing, and it accelerated in 2026 when Anthropic shipped Opus 4.6 in February and Opus 4.7 on April 16. In the same window, OpenAI shipped GPT-5.4, keeping the leaderboard tight and the debate alive. The short version: both are excellent, both cost $20/month on the consumer tier, and the right answer depends almost entirely on what you actually use AI for. The rest of this post gives you the hard data to decide.

The Head-to-Head Data (April 2026)

LMSYS Chatbot Arena — Crowdsourced Blind Battles

The LMSYS Arena (openlm.ai) is the single best real-world signal of model quality because it’s based on blind head-to-head battles from millions of anonymous users, not cherry-picked benchmarks. As of April 2026, Anthropic’s Claude models occupy three of the top four spots on the overall leaderboard, and Claude’s top three entries lead every GPT-5 variant on coding Elo.

| Rank | Model | Provider | Overall Elo | Coding Elo |
|---|---|---|---|---|
| 1 | Claude Opus 4.7 Thinking | Anthropic | 1505 | 1565 |
| 3 | Claude Opus 4.7 | Anthropic | 1503 | 1554 |
| 4 | Claude Opus 4.6 Thinking | Anthropic | 1503 | 1545 |
| 6 | GPT-5.4-high | OpenAI | 1495 | 1538 |
| 8 | Claude Opus 4.6 | Anthropic | 1490 | 1535 |

The takeaway: Anthropic dominates the frontier. GPT-5.4-high is competitive, trailing the top three Claude entries by 7 to 27 Elo on coding (and edging out the non-thinking Opus 4.6), but Claude holds the top of the board on both overall quality and coding.
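What does a 7 to 27 point Elo gap actually mean in practice? A minimal sketch, assuming the Arena scores behave like standard Elo ratings (the leaderboard reports its fitted scores on an Elo-style scale), converts each gap into an expected head-to-head preference rate using the classic Elo expected-score formula. The function and variable names are illustrative, not part of any Arena tooling.

```python
def expected_win_rate(rating_a: float, rating_b: float) -> float:
    """Standard Elo expected score: chance that A is preferred over B in one battle."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


# Coding Elo values copied from the leaderboard table.
CLAUDE_CODING_ELO = {
    "Claude Opus 4.7 Thinking": 1565,
    "Claude Opus 4.7": 1554,
    "Claude Opus 4.6 Thinking": 1545,
    "Claude Opus 4.6": 1535,
}
GPT_54_HIGH_CODING_ELO = 1538

for model, elo in CLAUDE_CODING_ELO.items():
    p = expected_win_rate(elo, GPT_54_HIGH_CODING_ELO)
    print(f"{model} vs GPT-5.4-high: {elo - GPT_54_HIGH_CODING_ELO:+d} Elo, "
          f"~{p:.1%} expected preference")
```

Under that assumption, the largest gap in the table (27 points) works out to roughly a 54% expected preference, which is why "competitive" is the right word for GPT-5.4-high: the leader wins only slightly more than half of head-to-head coding battles.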

Coding Benchmarks (SWE-bench and Real-World Accuracy)

Coding is the single most discussed sub-topic in the ChatGPT vs Claude comparison in 2026, because it’s where the switching pressure is strongest. Here’s the benchmark picture on the tests that matter.

| Benchmark | Claude (Opus 4.6 / 4.7) | GPT-5.4 / GPT-5.4-high | Winner |
|---|---|---|---|
| SWE-bench Verified (real GitHub issues) | 78.7–80.8% | 76.9–80.0% | Claude (about +1 to +2 pts) |
| SWE-bench Pro | 64.3% (Opus 4.7) | 57.7% | Claude (+6.6 pts) |
| MCP-Atlas (tool invocation) | Opus 4.7 leads | n/a | Claude (+9.2 pts) |
| Functional coding accuracy (real-world) | ~95% | ~85% | Claude (+10 pts) |
| GPQA Diamond (PhD-level reasoning) | 88.8–90.5% (thinking) | 91.4% (xhigh) | GPT-5.4 (narrowly) |
| Developer preference (coding, 2026 surveys) | 70% | 30% | Claude |

SWE-bench Verified uses real GitHub issues from production repositories, not toy problems, which makes it one of the closest proxies available for actual developer performance. Claude edging ahead of GPT on this benchmark is one of the most consequential shifts in the AI tooling market this year.

The gap widens on functional coding accuracy. In real multi-file refactors and production-grade code tasks, Claude hits ~95% correctness versus ~85% for GPT-5.4, and that 10-point margin likely goes a long way toward explaining why roughly 70% of developers now prefer Claude for coding tasks, according to 2026 industry surveys. Claude produces fewer hallucinations, handles larger codebases more reliably, and preserves context across multi-file edits better than GPT-5.4.
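Another way to read the 95% versus 85% figures is through the failures rather than the successes: 5% versus 15% is a threefold difference in work you have to fix by hand. The sketch below makes that arithmetic explicit, assuming the accuracy figures behave like independent per-task success rates; the 200-task monthly volume is purely hypothetical.

```python
def expected_failures(success_rate: float, tasks: int) -> float:
    """Expected number of failed tasks, assuming each task succeeds independently."""
    return (1.0 - success_rate) * tasks


TASKS = 200  # hypothetical: coding tasks handed to the model in a month

claude_failures = expected_failures(0.95, TASKS)
gpt_failures = expected_failures(0.85, TASKS)

print(f"Claude: ~{claude_failures:.0f} failed tasks")
print(f"GPT-5.4: ~{gpt_failures:.0f} failed tasks")
print(f"Ratio: {gpt_failures / claude_failures:.1f}x more tasks to fix by hand")
```

The ratio stays the same at any volume, so the absolute review burden grows with how much code you push through the model.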

On pure reasoning, GPT-5.4 in its xhigh configuration actually pulls slightly ahead on GPQA Diamond at 91.4%, so for abstract academic reasoning OpenAI still has an edge. But coding performance drives daily professional workflow decisions, while a GPQA Diamond lead is an academic edge case. If you want the fuller picture, our Claude Opus 4.7 feature deep-dive walks through what’s actually new in the latest Claude release, and the April 2026 AI writing tools update covers all of this month’s model moves in context.

Market Share and Usage (Who’s Actually Winning the Numbers Game)

A lead on benchmarks doesn’t translate to a lead on users — at least not yet. ChatGPT still crushes on pure scale.

| Metric | ChatGPT (OpenAI) | Claude (Anthropic) | Notes |
|---|---|---|---|
| Global AI chatbot web traffic share | 60.4–64.5% | 2.0–4.5% | ChatGPT #1, but down ~22 pts YoY |
| AI search / chat market share | ~60% | 3.2–4.9% | Gemini surging to 13–25% |
| Weekly active users | >900 million | Not disclosed | ChatGPT leads raw volume |
| U.S. mobile app daily users | 45.3% share (↓ from 69%) | Briefly overtook ChatGPT Feb–Mar 2026 | Claude has higher time per user (34.7 min avg) |

ChatGPT is still the biggest AI product in the world by a wide margin. It has the widest integration ecosystem, the most plugins and tools, the most enterprise deployments, and the most mindshare. But the trend line is telling: ChatGPT lost roughly 22 percentage points of market share in 12 months, and Claude briefly overtook it in U.S. mobile app daily users in February and March 2026.

Claude isn’t going to surpass ChatGPT in raw scale any time soon, but it doesn’t need to. What matters for most professionals is which tool delivers the best output for their specific workflow, and Claude’s outsized growth among developers, writers, and long-document workers is the story driving that 11× search volume spike. For a broader view of how these tools fit into solo workflows, see our best AI tools for solopreneurs and AI tools for freelancers roundups.

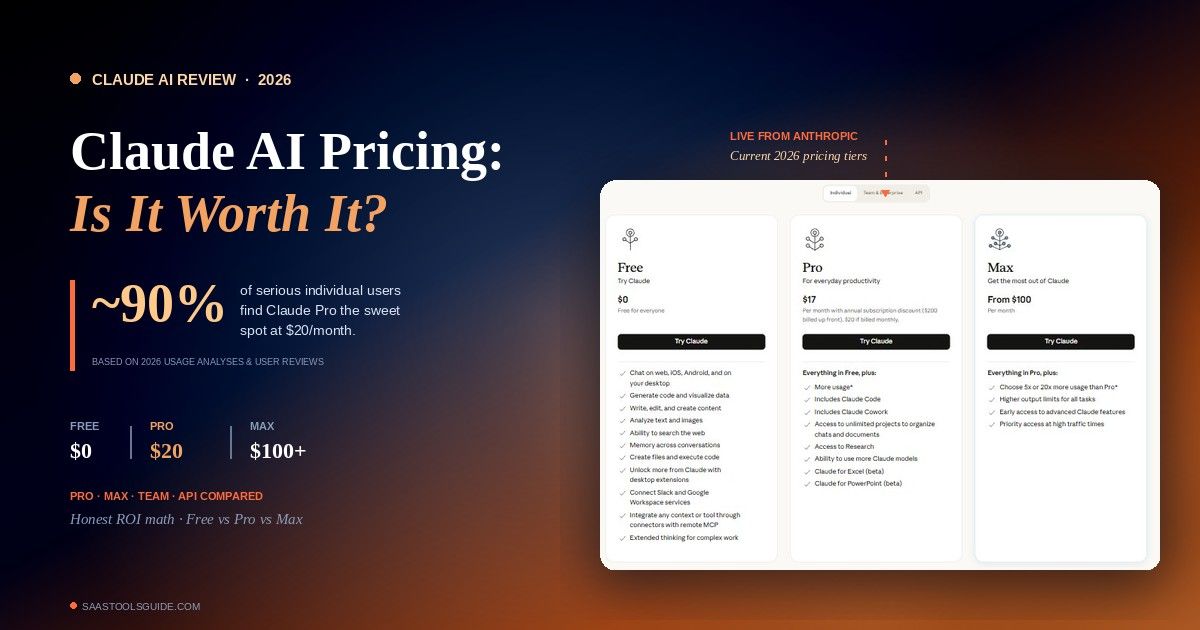

Pricing and Practical Specs

| Feature | Claude (Sonnet 4.6 / Opus 4.6) | ChatGPT (GPT-5.4) | Winner |
|---|---|---|---|
| Context window (tokens) | 200K standard (1M on Opus) | 128K standard (1M on some tiers) | Claude |
| Consumer price | $20/month (Pro) | $20/month (Plus) | Tie |
| API input / output per 1M tokens (Sonnet or equivalent tier) | $3 / $15 | $2.50 / $10–15 | ChatGPT (slightly) |
| Writing quality | Superior natural prose | Strong versatility | Claude |
| Multimodal tools (image gen, voice, video) | Solid | Market-leading | ChatGPT |

At the consumer tier, pricing is a wash — both $20/month. On the API, ChatGPT is marginally cheaper per token on equivalent tiers, which matters if you’re running heavy automation or building a product on top. On context window, Claude’s 200K standard (with 1M on Opus) comfortably beats ChatGPT’s 128K standard — if you work with long documents, legal files, full codebases, or research papers, this is the biggest practical difference between the two.
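To see how "marginally cheaper per token" plays out at volume, here is a small cost sketch using the per-1M-token list prices from the pricing table. The workload (50M input and 10M output tokens a month) is a hypothetical example, and since GPT-5.4’s output price is quoted as a $10 to $15 range, both ends are shown. The `ApiPricing` class is an illustrative helper, not part of either vendor’s SDK.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ApiPricing:
    """List prices in USD per 1M tokens."""
    input_per_m: float
    output_per_m: float

    def monthly_cost(self, input_tokens: int, output_tokens: int) -> float:
        """Cost in USD for a month's input and output token volume."""
        return (input_tokens / 1_000_000) * self.input_per_m + (
            output_tokens / 1_000_000
        ) * self.output_per_m


# Prices from the pricing table; GPT-5.4's output price is quoted as a $10-15 range.
claude_sonnet = ApiPricing(input_per_m=3.00, output_per_m=15.00)
gpt_low = ApiPricing(input_per_m=2.50, output_per_m=10.00)
gpt_high = ApiPricing(input_per_m=2.50, output_per_m=15.00)

# Hypothetical automation workload: 50M input tokens, 10M output tokens per month.
IN_TOK, OUT_TOK = 50_000_000, 10_000_000

for label, pricing in [
    ("Claude (Sonnet tier)", claude_sonnet),
    ("GPT-5.4 (low end of range)", gpt_low),
    ("GPT-5.4 (high end of range)", gpt_high),
]:
    print(f"{label}: ${pricing.monthly_cost(IN_TOK, OUT_TOK):,.2f}/month")
```

On this hypothetical workload the gap is $25 to $75 a month. Because cost scales linearly with token volume, ten times the traffic means ten times the gap, which is why the difference matters for heavy automation and barely registers for occasional use.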

Blind human tests with 134 voters across 8 rounds pitted Claude against ChatGPT and Gemini: Claude won 4 of the 8 rounds and ChatGPT won 1. That’s not a knockout, but it is consistent with the benchmark story: when you strip away the brand and judge output quality alone, Claude currently has the edge.
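With only 8 rounds, it’s worth asking how often an assistant would win 4 or more purely by luck. The sketch below is a simple binomial check under loudly stated assumptions: three equally matched assistants, independent rounds, and no ties, so each wins any given round with probability 1/3. The actual round structure of the test may differ, so treat this as a rough sanity check only.

```python
from math import comb


def prob_at_least(k: int, n: int, p: float) -> float:
    """P(X >= k) for X ~ Binomial(n, p)."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


ROUNDS = 8
CLAUDE_WINS = 4
# Baseline assumption: three equally matched assistants, no ties,
# and independent rounds, so each wins any given round with p = 1/3.
chance = prob_at_least(CLAUDE_WINS, ROUNDS, 1 / 3)
print(f"P(>= {CLAUDE_WINS} of {ROUNDS} wins by chance alone): {chance:.1%}")
```

Under those assumptions the chance comes out to roughly 26%, so a small test like this is suggestive rather than conclusive on its own. Its value is that it points in the same direction as the much larger Arena sample.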

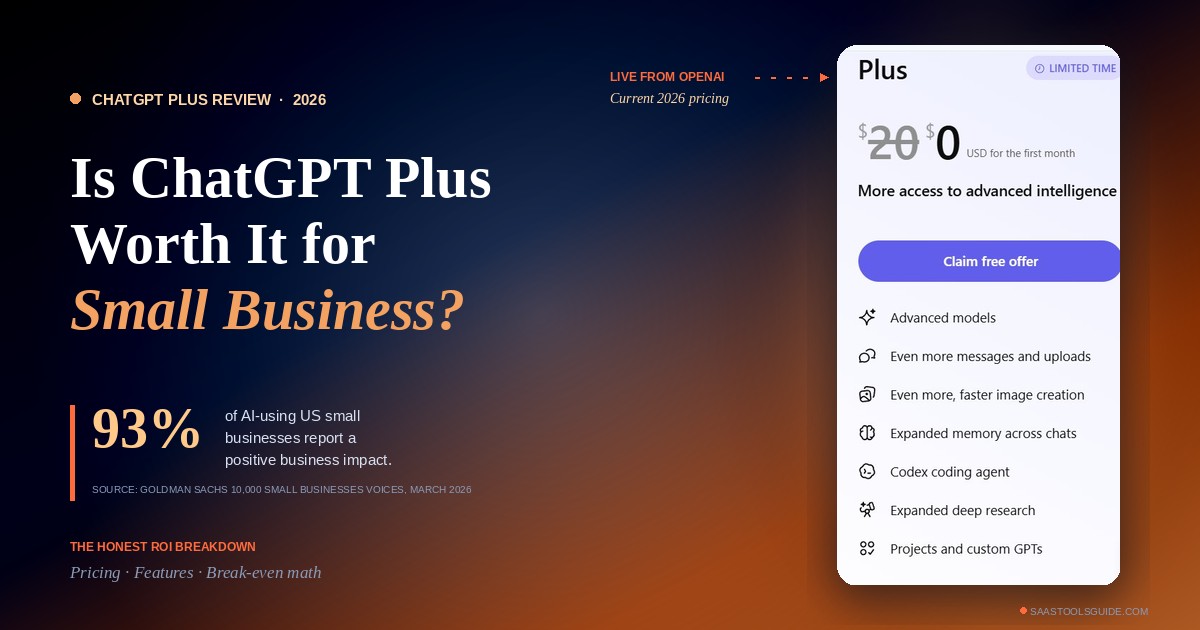

When to Choose ChatGPT

Pick ChatGPT (GPT-5.4) if your work looks like any of these:

- You need heavy multimodal capability (image generation, voice mode, or video workflows), where OpenAI’s ecosystem is simply ahead.
- You rely on plugins, GPTs, or integrations with third-party apps; the GPT Store and broader OpenAI plugin ecosystem are much larger than Claude’s.
- You work across consumer-facing tools where broad familiarity matters (customer support, casual content, everyday productivity).
- You care about API cost optimization at scale: ChatGPT’s per-token pricing is slightly lower on equivalent tiers, which compounds over millions of API calls.
- You need SimpleQA-style factual grounding, since GPT-5.4 still has an edge on strict factual recall benchmarks.
- You’re building a product that requires the widest possible integration surface, because ChatGPT has the largest third-party tool ecosystem by a significant margin.

When to Choose Claude

Pick Claude (Opus 4.7 or Sonnet 4.6) if any of these describe your work:

- You write code, especially multi-file refactors, production codebases, or agentic coding workflows, where Claude leads on SWE-bench Verified, SWE-bench Pro, and MCP-Atlas.
- You work with long documents (legal contracts, research papers, long-form books) that push past 128K tokens, where Claude’s 200K standard context (and 1M on Opus) handles what ChatGPT’s standard 128K can’t.
- You write prose for publication (essays, articles, marketing copy, editorial content), where Claude’s natural-sounding output tends to beat ChatGPT in blind human tests.
- You care about reducing hallucinations in load-bearing work, especially code, where Claude’s ~95% functional coding accuracy versus ChatGPT’s ~85% matters.
- You need careful, nuanced reasoning on novel problems; Claude’s “thinking” variants lead the LMSYS Arena on both overall and coding Elo.
- You handle enterprise documents with strict brand voice requirements; Claude’s April 2026 Brand Voice Lock feature enforces style guides at the API level, which is a meaningful enterprise differentiator.

The “Both” Answer Most Professionals End Up With

Here’s the practical reality: most power users we’ve talked to in 2026 end up paying for both. At $20/month each, running Claude Pro and ChatGPT Plus together is $40/month — barely a rounding error for any professional whose productivity is tied to AI output. The workflow that’s becoming standard: Claude for coding, long documents, and first-draft writing. ChatGPT for image generation, voice, quick factual lookups, and tasks where a specific plugin or GPT already exists. You open whichever tab matches the task and stop fighting about which is “better.” The freelancers and solopreneurs who are billing more per hour in 2026 aren’t the ones who picked the right AI. They’re the ones who stopped treating this as a single-winner decision.

What’s Changing Through the Rest of 2026

Three trends are worth watching as the comparison evolves. Pricing compression is accelerating: GPT-5.4 cut API prices in March, while Claude Opus 4.7 held the line on list prices and leaned on a 50% batch API discount. By Q3 2026, expect “unlimited AI words” to be a $9–$19 baseline rather than a $49 premium.

Agentic capabilities are where the next real gap is likely to open. Claude Opus 4.7’s leads on MCP-Atlas and OSWorld-Verified suggest Anthropic is pulling ahead on autonomous agents, tool use, and long-horizon tasks, which is exactly the shape of work likely to dominate AI products in 2027.

The multimodal gap will narrow. ChatGPT has led on image, voice, and video, but Claude has been steadily shipping multimodal upgrades (Opus 4.7 added 3.75MP vision input), and today’s gap may well be gone this time next year. None of this changes the April 2026 answer (Claude for quality-sensitive work, ChatGPT for scale and ecosystem), but it does mean you should revisit the comparison every six months.

Frequently Asked Questions About ChatGPT vs Claude

Is Claude better than ChatGPT in 2026?

For coding, long-context work, and writing quality — yes, Claude is measurably better in April 2026. It leads on SWE-bench Verified, SWE-bench Pro, LMSYS Arena coding Elo, and blind human writing tests. For raw scale, multimodal tools, and third-party integrations, ChatGPT still leads. Neither is universally “better” — it depends on your workflow.

Which AI do developers actually prefer — ChatGPT or Claude?

Around 70% of developers surveyed in 2026 prefer Claude for coding tasks, especially multi-file refactoring, production codebases, and agentic coding workflows. This is a significant shift from 2024, when ChatGPT was the developer default. The preference is driven by fewer hallucinations, better multi-file context handling, and higher SWE-bench Verified scores. Our full AI tools for freelancers guide breaks down how this is reshaping freelance developer workflows.

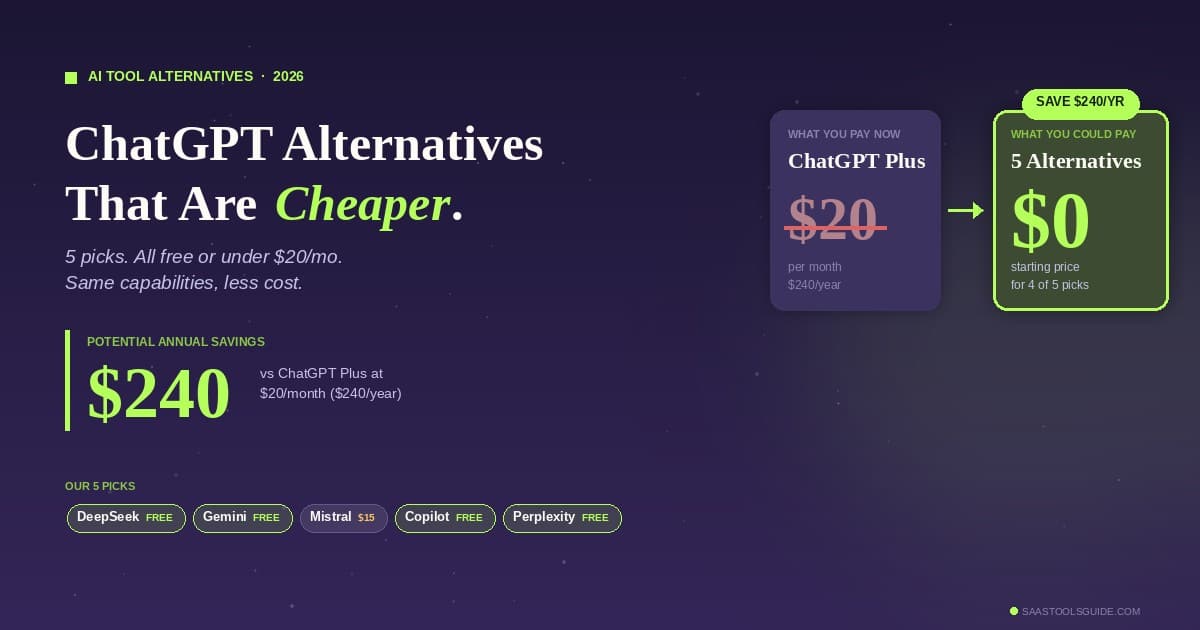

Is ChatGPT or Claude cheaper?

At the consumer tier, both are $20/month — a tie. On the API, ChatGPT is marginally cheaper per token on equivalent tiers (around $2.50 input versus Claude’s $3 per million tokens). For heavy API usage, ChatGPT saves you money. For moderate use, the difference is negligible.

Does Claude have a bigger context window than ChatGPT?

Yes. Claude offers 200K standard context (1M on Opus tiers) versus ChatGPT’s 128K standard (1M on some tiers). If you work with long documents, large codebases, or research-heavy material, Claude’s context window is the single biggest practical advantage.
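To translate token counts into something tangible, here is a back-of-the-envelope sketch. The 500 words per page and 0.75 words per token figures are rough assumptions (tokenizers differ by model and language), and in practice the window also has to hold your instructions and the model’s reply, so usable room is somewhat smaller.

```python
WORDS_PER_PAGE = 500    # assumption: a dense, single-spaced page of prose
WORDS_PER_TOKEN = 0.75  # assumption: rough rule of thumb for English text


def pages_that_fit(context_tokens: int) -> float:
    """Approximate pages of English prose that fit in a context window."""
    return context_tokens * WORDS_PER_TOKEN / WORDS_PER_PAGE


for label, tokens in [
    ("ChatGPT standard (128K)", 128_000),
    ("Claude standard (200K)", 200_000),
    ("1M-token tiers", 1_000_000),
]:
    print(f"{label}: ~{pages_that_fit(tokens):,.0f} pages")
```

Under those assumptions the standard windows hold roughly 190 and 300 pages respectively, so the practical difference shows up on book-length manuscripts, large contract bundles, or whole codebases rather than on typical reports.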

Can I use both ChatGPT and Claude together?

Yes, and most professional power users do. Running both Claude Pro and ChatGPT Plus costs $40/month combined and gives you best-of-both-worlds coverage — Claude for coding and long documents, ChatGPT for multimodal and plugin-dependent work.

Is Claude Opus 4.7 the same as Claude?

Claude is Anthropic’s AI brand, with three model tiers: Haiku (fast, cheap), Sonnet (balanced, and the default), and Opus (most capable). Opus 4.7, released April 16, 2026, is the current flagship. When someone says “Claude” without specifying, they usually mean the Sonnet tier. When comparing to GPT-5.4, Opus 4.7 is the fair head-to-head match.

Should I cancel my ChatGPT subscription and switch to Claude?

Not necessarily. If your primary use is writing, coding, or long-document work, Claude is probably worth the switch in 2026. If you rely on multimodal features, plugins, or specific GPTs you’ve built workflows around, keep ChatGPT. The safest move is to run both for a month at $40 combined and see which one you actually open more.

The Bottom Line

Every honest 2026 comparison arrives at the same conclusion: Claude wins on the quality metrics that matter most to professionals — coding benchmarks, long-context work, natural writing, and LMSYS Arena Elo. ChatGPT wins on scale, ecosystem, and multimodal breadth — the metrics that matter most for consumer adoption and platform leverage. The gap is narrowing, but the direction is clear: Claude’s share is rising, ChatGPT’s share is falling, and for most professional workflows the answer in April 2026 is Claude first, ChatGPT second. That’s not a forecast. It’s what the benchmarks, the LMSYS Arena, the developer surveys, and the blind human tests all say at the same time.

Still deciding? Spend a week running Claude’s free tier and ChatGPT’s free tier side by side on the exact tasks you do every day. You’ll have your answer by the end of it, no blog post required.

Related Reading on SaaS Tools Guide

- What Is Claude AI? Features, Uses, Benefits, and How It Works

- AI Writing Tools Updates April 2026 News: The Biggest Changes This Month

- AI Tools for Freelancers in 2026: The Complete Stack to Earn More & Work Less

- Best AI Tools for Solopreneurs in 2026: The Honest, Tested Stack

- Best AI Tools for Entrepreneurs in 2026: The Growth-Lever Playbook

- Best AI Tools for Business: Top Platforms to Boost Productivity and Growth